Here’s What 2027 Holds for Overwhelmed SRE Teams

Something weird happened in 2025. AI adoption in monitoring hit 54% for the first time, and on-call toil went up. Not a little. Up to a 30% median, the first increase in five years (Catchpoint SRE Report, 2025). More tools, more toil. You’d expect it to go the other way.

And it wasn’t just toil. 92% of developers said their AI tools made deployment failures bigger when they happened (Catchpoint, 2025). More coverage, larger blast radius. The engineers fielding those incidents in 2027 are dealing with more surface area and more pressure than they were two years ago, even with better tooling in hand. Probably not what anyone planned for.

That gap between ‘more tools’ and ‘better on-call’ is what ITOC360 was built for. One platform: alert triage, scheduling, escalation, postmortems. No more hoping four different tools talk to each other correctly at 3am.

Key Takeaways

- Alert fatigue is structural, not a tooling problem: 46% of all alerts are false positives.

- Agentic AI will handle first-line incident response for most teams by 2027, but Gartner warns 40% of those projects will be canceled due to governance gaps — platforms like **ITOC360** solve this with built-in approval gates and audit trails.

- OpsGenie’s April 2027 shutdown is a forced market reset — teams that delay migration decisions will feel it.

- Blameless postmortem culture remains the biggest gap: 78% of teams run postmortems, but only 22% make them blameless.

- Human judgment isn’t going away — it’s getting narrower, higher-stakes, and more consequential.

Is Alert Fatigue Actually Getting Worse in 2027?

Yes. Worse and faster than teams can keep up with.

The average team gets 3,832 alerts a day (Vectra AI, 2025). Roughly half are false positives (Microsoft/Omdia, 2026). Most don’t need a human anywhere near them. The engineers on the receiving end figured this out a long time ago, which is actually part of the problem.

When you get paged for nothing enough times, you stop treating every page like it matters. You start working from gut feel instead of the alert. And then a real one comes through and blends in with the noise. 73% of companies traced a real outage back to an alert someone suppressed or ignored (Runframe, 2025). Not a fringe case. Most companies.

The thing nobody says out loud

It’s not the volume that breaks teams. It’s what the volume does to trust over time. Once engineers stop believing the alert system, you can’t fix that with another suppression rule. The system needs a rethink, not more tuning.

ITOC360 scores and routes alerts before they generate a page. Not silencing notifications after they fire. Stopping the noisy condition from ever becoming a page. Teams usually don’t feel how different that is until they’ve worked both ways for a month or two.
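To make the difference concrete, here is a minimal Python sketch of scoring an alert before deciding whether it becomes a page. The `Alert` fields, the weights, and the thresholds are illustrative assumptions, not ITOC360's actual model or API.

```python
from dataclasses import dataclass


@dataclass
class Alert:
    service: str
    severity: int                  # 1 (low) to 5 (critical); hypothetical scale
    historical_action_rate: float  # share of past firings a human acted on, 0..1
    customer_facing: bool


def score(alert: Alert) -> float:
    """Blend severity and past usefulness into a 0..1 score (assumed weights)."""
    base = 0.5 * (alert.severity / 5) + 0.4 * alert.historical_action_rate
    if alert.customer_facing:
        base += 0.1
    return min(base, 1.0)


def route(alert: Alert, page_threshold: float = 0.6,
          ticket_threshold: float = 0.3) -> str:
    """Decide the destination before any notification is sent."""
    s = score(alert)
    if s >= page_threshold:
        return "page"
    if s >= ticket_threshold:
        return "ticket"
    return "log"


noisy = Alert("batch-report", severity=2, historical_action_rate=0.02,
              customer_facing=False)
real = Alert("checkout", severity=5, historical_action_rate=0.8,
             customer_facing=True)
print(route(noisy), route(real))
```

The point of the sketch is the ordering: the routing decision happens before a page exists, so the noisy alert is logged rather than fired and then silenced.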

Seven in ten on-call teams put alert fatigue in their top three problems (Catchpoint, 2025). That figure hasn’t moved in two years. It’s not going to fix itself.

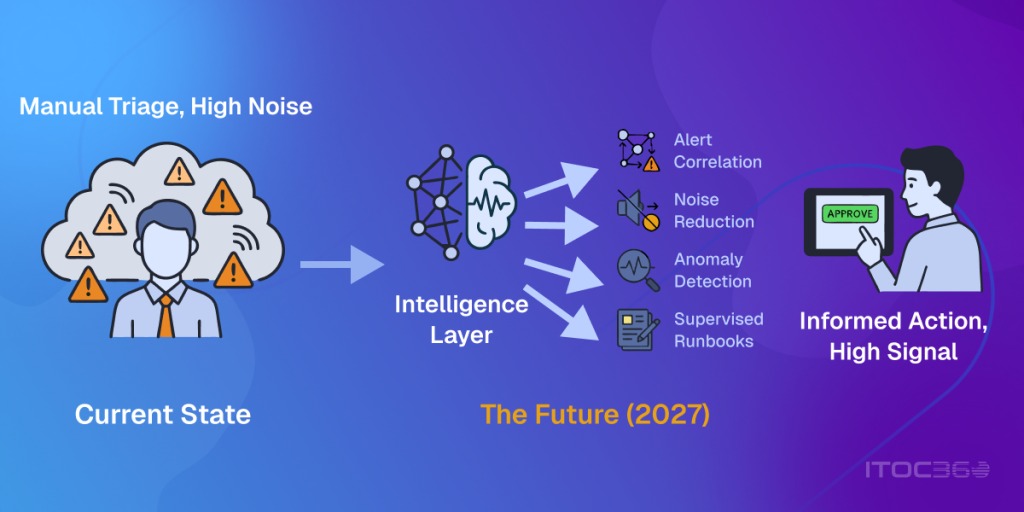

Will Agentic AI Handle First-Line Incident Response by 2027?

For most teams, probably yes. Gartner has enterprise agentic AI in IT infrastructure at under 5% today and 70% by 2029. 86% of companies say they expect to have AI agents running by 2027 (Runframe, 2025). That window is genuinely close.

And to be specific about what ‘agentic’ means here, because it gets thrown around loosely: not classification, not routing. The system takes action. Rolls back the bad deploy. Runs the runbook. Updates the status page. Drafts the war room message. All before anyone opens a laptop. On-call teams already doing this are saving roughly five hours per incident (SolarWinds, 2025). On a busy team running 15 incidents a month, that adds up fast.

But. Gartner also says 40% of agentic AI projects will be canceled before 2027 is out. Not because the technology failed. Because teams shipped automation without audit trails, without approval steps, without any way to roll back when the AI got it wrong. That’s not a speed improvement. That’s faster damage.

Adoption data is interesting here: GenAI in ops went from 33% to 65% in one year (Runframe, 2025). But 69% of AI-driven decisions still need a human to verify them before they go live (Runframe, 2025). That gap will shrink. For now, platforms that make that verification quick and leave a paper trail are worth paying for.
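What an approval step with a paper trail can look like, as a rough Python sketch: every proposed action is logged, and only reversible, high-confidence actions skip the human. The names here (`ProposedAction`, `AuditLog`, `gate`) and the 0.9 threshold are hypothetical, chosen for illustration rather than taken from any vendor's API.

```python
import time
from dataclasses import dataclass, field
from typing import Callable


@dataclass
class ProposedAction:
    name: str
    confidence: float  # the agent's own confidence, 0..1 (assumed to exist)
    reversible: bool   # can this be rolled back if it turns out to be wrong?


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, action: ProposedAction, decision: str, actor: str) -> None:
        self.entries.append({
            "ts": time.time(),
            "action": action.name,
            "confidence": action.confidence,
            "decision": decision,
            "actor": actor,
        })


def gate(action: ProposedAction, log: AuditLog,
         approve: Callable[[ProposedAction], bool],
         auto_threshold: float = 0.9) -> bool:
    """Return True if the action may run. Every outcome is written to the log."""
    if action.reversible and action.confidence >= auto_threshold:
        log.record(action, "auto_approved", "policy")
        return True
    approved = approve(action)  # a human decision, e.g. a chat prompt
    log.record(action, "approved" if approved else "rejected", "human")
    return approved


log = AuditLog()
gate(ProposedAction("rollback deploy", 0.95, reversible=True), log,
     approve=lambda a: False)
gate(ProposedAction("drop index", 0.95, reversible=False), log,
     approve=lambda a: False)
print([e["decision"] for e in log.entries])
```

Irreversible actions always go to a person in this sketch, no matter how confident the agent is. That is a deliberate choice: confidence says nothing about how bad a wrong call would be.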

By the numbers

Gartner: 70% of enterprises deploying agentic AI for IT infrastructure by 2029, up from under 5% today. Also Gartner: 40% of those projects canceled by 2027 because of missing governance. Both things will be true at the same time.

How to Migrate to a Modern On-Call Platform Without the Risk

April 5, 2027 is the hard date for the OpsGenie shutdown. Atlassian stopped selling the product in June 2025. A lot of teams know this and haven’t moved on it. That’s going to be a problem, because rebuilding alert routing, escalation trees, and on-call schedules is not something you do in the week before a deadline.

There’s a real upside here though, if you approach it right. Most teams built their OpsGenie setup years ago and haven’t looked at it critically since. Forced migration is a forced audit. A lot of what you find probably wasn’t serving you well anyway.

ITOC360 has a migration path specifically for this. Not swapping one feature set for another. Moving to AI-native routing, smarter rotation scheduling, and postmortem tooling that teams actually end up using. One platform instead of four held together with integrations.

What to look for in a replacement, honestly

- AI triage that works on day one: ITOC360 scores and routes before anything reaches a human

- Works with your existing stack: API-first, fits your observability and CI/CD setup without custom glue code

- SLO-based alerting: you get paged when error budget is burning, not when a threshold ticks over

- Postmortem tooling people will actually run: blameless by default, tied to the incident timeline automatically

- Multi-cloud out of the box: AWS, GCP, Azure, hybrid from a single config, not four separate integrations

Teams that pick a replacement based on OpsGenie feature parity will spend the next few years maintaining a 2019-era alert philosophy on newer infrastructure.

Talk to us about switching to ITOC360.

From Reactive Pages to Proactive Operations: What’s Actually Shifting

The old model was: something breaks, alert fires, engineer wakes up, engineer investigates. Repeat every few days. That model is being replaced, and the pace of the shift has surprised a lot of people. 68.4% of companies say they’re doing proactive incident management now, up from 35% four years ago (Atlassian, 2024, N=500). Nearly double.

The ROI case is pretty direct. Companies running full-stack observability have 77% fewer outages per year than those using separate monitoring tools. Not recovering faster. Just having the incident not happen.

What the new stack looks like

- AIOps triage: catches anomalies before anything breaches a threshold

- eBPF observability: kernel-level visibility, almost zero performance overhead

- SLO-based alerting: you hear about it when your error budget is actually at risk, not when CPU hits 80%

- Runbook automation: common failure patterns get handled before a human is in the loop at all

ITOC360 connects these layers instead of leaving teams to wire up separate tools and hope they talk to each other. Alerting, scheduling, runbooks, and postmortems in one place, one config.
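The SLO-based alerting item in the list above is the easiest one to show in code. Burn rate is the observed error rate divided by the error budget (1 minus the SLO target). The sketch below pages only when both a long and a short window are burning fast. The 14.4 default follows the commonly cited example for a 99.9% SLO over 30 days (about 2% of the budget consumed in one hour); the function names and window sizes are assumptions for illustration.

```python
def burn_rate(errors: int, requests: int, slo_target: float) -> float:
    """Observed error rate divided by the error budget (1 - SLO target)."""
    if requests == 0:
        return 0.0
    error_budget = 1.0 - slo_target
    return (errors / requests) / error_budget


def should_page(long_window: tuple[int, int], short_window: tuple[int, int],
                slo_target: float = 0.999, threshold: float = 14.4) -> bool:
    """Page only if both windows exceed the threshold.

    Each window is an (errors, requests) pair. The short window keeps the
    alert from lingering after the problem has already stopped.
    """
    return (burn_rate(*long_window, slo_target) >= threshold
            and burn_rate(*short_window, slo_target) >= threshold)


# 2% errors against a 0.1% budget is a burn rate of about 20: page.
print(should_page(long_window=(2000, 100_000), short_window=(200, 10_000)))
# The last few minutes are healthy again, so there is no page.
print(should_page(long_window=(2000, 100_000), short_window=(5, 10_000)))
```

Note what is missing: CPU, memory, and every other raw threshold. The only question asked is whether users are losing reliability faster than the budget allows.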

AIOps was a $2.67 billion market in 2026, heading toward $11.8 billion by 2034 (Fortune Business Insights). Forrester expects tech leaders to triple their AIOps spend specifically to reduce technical debt (Forrester, 2025). Already showing up in budget cycles, not just roadmaps.

On-Call Burnout Is Getting Worse. AI Won’t Fix It on Its Own.

Close to 70% of SREs say on-call stress is directly burning them out (Catchpoint, 2025). Operational toil hit a 30% median in 2025, the first time it’s risen in five years. Two-thirds of engineers feel pushed to ship faster than their reliability work can keep pace with. None of these trends are improving.

Here’s the part that doesn’t show up in the press releases: more AI investment has made some of this harder. AI flags more edge cases. Surfaces more deployment risk. Creates more things that need a human to approve or escalate. Even when total incident count goes down, the cognitive weight per incident goes up. You’re dealing with fewer pages but harder ones.

What actually works

Tooling helps but doesn’t fix burnout. The teams that actually reduce it invest in rotation design: rotations of at least five or six people, real handoff rituals, hard recovery windows after bad nights. None of that happens automatically.

ITOC360’s scheduling module distributes on-call load fairly, flags when one person is absorbing too much, and enforces recovery time after high-severity incidents. On-call health is a real metric inside ITOC360, not something you have to track in a spreadsheet separately.
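Even without a dedicated platform, the "one person is absorbing too much" check is simple enough to sketch. The Python below flags anyone whose page count is well above the rotation average. The 1.5x factor and the function name are assumptions for illustration, not how ITOC360 computes on-call health.

```python
def overloaded(pages_by_engineer: dict[str, int], factor: float = 1.5) -> list[str]:
    """Return engineers whose page count exceeds `factor` times the rotation mean."""
    if not pages_by_engineer:
        return []
    mean = sum(pages_by_engineer.values()) / len(pages_by_engineer)
    return sorted(name for name, pages in pages_by_engineer.items()
                  if pages > factor * mean)


# Hypothetical page counts for one month on a five-person rotation.
last_month = {"ana": 12, "ben": 3, "chi": 4, "dev": 2, "eli": 4}
print(overloaded(last_month))
```

A raw page count is a crude proxy. Weighting by time of day or severity would be closer to what people actually feel, but the count alone is usually enough to start the conversation.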

78% of teams run postmortems. Only 22% run blameless ones (Atlassian, 2024). That gap has persisted for a decade despite Google’s SRE practices being publicly available. The tools aren’t the blocker. ITOC360’s postmortem module builds the blameless structure in by default: timelines pulled from system data, not memory; causes tied to conditions rather than people; action items with owners. But it still needs leaders who actually want to run it that way.

What Does an On-Call Engineer’s Day Look Like in 2027?

The 3am page isn’t gone. But it’s rare enough by 2027 that when it fires, it means something real. Most days start with a digest of what happened overnight: what fired, what the system handled automatically, what got escalated and why. It reads like a morning briefing, not a crisis list.

Some rough math

SolarWinds (2025) puts AI-assisted incident savings at about 4.87 hours per incident. A 10-person team running 15 incidents a month could drop from roughly 60 hours of incident work monthly to under 20, assuming the AI handles 65% of cases. That’s a significant chunk of capacity returned.
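One way to reproduce that estimate, as a short Python sketch. It assumes the per-incident saving applies only to the share of incidents the AI handles, which is one reading of the figures above and not a published model.

```python
incidents_per_month = 15
baseline_hours = 60.0            # monthly incident work, from the text above
hours_saved_per_incident = 4.87  # SolarWinds (2025) figure
ai_handled_share = 0.65          # assumed share of incidents the AI handles

hours_saved = incidents_per_month * ai_handled_share * hours_saved_per_incident
# Floor at zero: savings cannot exceed the work that existed to begin with.
remaining_hours = max(baseline_hours - hours_saved, 0.0)

print(f"saved: {hours_saved:.1f} h, remaining: {remaining_hours:.1f} h")
```

The result is sensitive to the baseline. Sixty hours across 15 incidents is four hours each, which is below the SolarWinds per-incident figure, so treat the output as a rough bound rather than a forecast.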

The job is changing. The 2027 on-call engineer isn’t the person who fixes everything. They’re the person who decides whether the AI’s proposed fix is actually right. They approve the uncertain calls. They run the postmortem. They decide where the automation is and isn’t trusted. Fewer incidents, but each one that reaches them matters more.

The financial stakes make this urgent. 91% of enterprises say an hour of downtime costs them more than $300,000. 41% put it at $1 million to $5 million per hour (ITIC, 2024, N=1,000+). At those numbers, slow incident response stops being a process problem and becomes a finance problem.

What Won’t Change in 2027, No Matter How Good the Tools Get

SLOs still need humans to agree on what reliability actually means for the business. That conversation between engineering and product about acceptable error budgets, what’s good enough for which services, can’t be automated. It’s political. It needs people who understand both sides.

Blameless postmortem culture is still mostly aspirational. 22% adoption after a decade of publicly available SRE guidance (Atlassian, 2024) says something about the gap between knowing what works and actually doing it. The tooling isn’t the bottleneck. Leadership wanting to run it that way is.

Getting response times under an hour still needs good tooling AND good process. ITOC360 covers the tooling side. The process side is yours to build.

Multi-cloud is getting more complicated, not less. 88% of teams run hybrid or multi-cloud environments (Microsoft, 2024), and major cloud security incidents jumped from 24% to 61% in a single year (SentinelOne, 2026). The surface area is expanding. ITOC360’s routing engine normalizes alerts across AWS, GCP, Azure, and on-prem from a single config layer, so at least the alerting setup isn’t adding to that complexity.

The teams that do well in 2027 invest in both things: automation with real governance, and cultural practices no platform installs for you. ITOC360 covers the first part.

Frequently Asked Questions

What is on-call incident management in 2027?

By 2027, a lot of the first-line response is handled by AI before a human is involved at all. Engineers deal with escalations that actually need judgment: things the system hasn’t seen before, calls where the AI isn’t confident, postmortem facilitation. The 3am page still happens. It just matters more when it does. ITOC360 is built for this model, where the predictable work is automated and engineers deal with everything that isn’t.

Will AI replace on-call engineers by 2027?

No, though the job looks different. AI handles the known stuff. What’s left for humans is harder: novel incidents, uncertain remediation calls, postmortems, SLO conversations with product. Gartner sees 70% enterprise agentic deployment by 2029 and also says 40% of those projects will be canceled without governance. The engineer’s job shifts toward overseeing and setting limits on the automation, less running from fire to fire.

What should teams look for when migrating from a legacy on-call tool?

Don’t pick a replacement based on OpsGenie feature parity. Pick based on what you actually need in 2027: AI triage that works without heavy configuration, SLO-based alerting instead of threshold noise, postmortem tooling that teams will actually run, and multi-cloud routing from a single config. ITOC360 was built around those criteria and has a migration path that avoids rebuilding everything from scratch.

How do you reduce alert fatigue in on-call incident management?

You have to fix it upstream, not just at the notification layer. That means scoring and routing alerts with AI before they fire a page, replacing threshold-based alerting with SLO-based alerting, and doing regular audits to retire alerts nobody acts on. Silencing pages after they fire just hides the problem. ITOC360 handles all three layers.

What is the average MTTR for high-performing teams in 2027?

Under an hour. That benchmark hasn’t shifted much. Low-performing teams are still over 24 hours. Agentic automation closes some of the gap but not all of it. You also need practiced process under pressure. ITOC360 handles the automation side. The runbook and postmortem modules help build the process side over time.

Is ITOC360 suitable for teams of all sizes?

Yes. Works for a small team on a lean rotation and for a large engineering org with multi-cloud infrastructure and multi-tier escalation. Pricing is based on rotation size, so you pay for what you actually use.

Where to Start

OpsGenie’s deadline is fixed. Agentic projects without governance are already being shut down. And blameless postmortem culture takes quarters to build, not sprints. The teams that will be in a decent position by 2027 are the ones who started working on this in 2026.

One concrete first step: audit your alert stack. If 46% or more of your alerts are false positives, which is roughly the industry average right now, adding AI on top of that just speeds up the wrong answers. Get the signal-to-noise ratio right first, then automate.
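A first pass at that audit can be a few lines of Python over your alert history. The sketch below assumes you can export each firing as a `(rule_name, was_actioned)` pair, and it treats "nobody acted on it" as a stand-in for "false positive", which is an approximation. The cutoffs are placeholders to tune.

```python
from collections import defaultdict


def audit(history: list[tuple[str, bool]], min_firings: int = 20,
          max_action_rate: float = 0.05) -> tuple[float, list[str]]:
    """Return (overall unactioned rate, rules that are candidates for retirement)."""
    fired: dict[str, int] = defaultdict(int)
    acted: dict[str, int] = defaultdict(int)
    for rule, actioned in history:
        fired[rule] += 1
        if actioned:
            acted[rule] += 1

    total = sum(fired.values())
    unactioned_rate = 1 - sum(acted.values()) / total if total else 0.0

    # A rule is a retirement candidate if it fires often and is almost never acted on.
    retire = sorted(rule for rule in fired
                    if fired[rule] >= min_firings
                    and acted[rule] / fired[rule] <= max_action_rate)
    return unactioned_rate, retire


# Hypothetical export: one noisy disk rule, one rule that usually matters.
history = ([("disk-80", False)] * 40
           + [("checkout-5xx", True)] * 9
           + [("checkout-5xx", False)] * 1)
rate, candidates = audit(history)
print(f"{rate:.0%} unactioned; review: {candidates}")
```

The `min_firings` guard matters: a rule that fired twice and was ignored twice has not produced enough evidence to retire.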

The goal isn’t a smaller team. It’s your best engineers working on the problems that actually need them, instead of triaging noise at 2am.

Get Started with ITOC360

ITOC360: AI triage that stops the noise before it pages anyone. Agentic escalation with audit trails and approval gates. Scheduling that protects your team from burning out. Postmortem workflows that actually get run. And a migration path from OpsGenie that doesn’t require rebuilding everything from scratch.

Sources

- Atlassian. (2024). State of Incident Management Report (N=500).

- Atlassian. OpsGenie Sunset Announcement.

- Catchpoint. (2025). SRE Report 2025.

- Fortune Business Insights. AIOps Market Size and Forecast.

- Forrester Research. (2025). Tech Leaders and AIOps Adoption Forecast.

- Gartner. (2025). Agentic AI in IT Infrastructure Operations Forecast.

- Google. Site Reliability Engineering Book.

- ITIC. (2024). Hourly Cost of Downtime Survey (N=1,000+).

- Microsoft / Omdia. (2026). State of the SOC Report.

- Microsoft. (2024). Hybrid and Multi-Cloud Adoption Research.

- Runframe. (2025). Incident Management and AI Operations Report.

- SentinelOne. (2026). Cloud Security Incidents Research.

- Vectra AI. (2025). Security Alert Volume Research.